Intel Xeon 6 Enters NVIDIA DGX Rubin Architecture: Why Did Shares Fall After a 7% Surge?

Intel's Xeon 6 processors will power NVIDIA's DGX Rubin NVL8 AI server, marking a strategic return for CPUs in AI architecture, particularly for real-time inference. This collaboration highlights the x86 architecture's continued relevance for task orchestration and data management, bridging CPUs and GPUs. While Intel's stock saw initial volatility, the partnership signifies a potential shift towards heterogeneous AI systems. Xeon 6's advantages include extensive memory support, increased bandwidth, PCIe 5.0 connectivity, and security features, crucial for accelerating AI inference workloads. This integration into the NVL8 system is viewed as a foundational step for future broader deployments.

TradingKey - At the 2026 NVIDIA GTC conference, Intel (INTC) announced that its Xeon 6 processors will officially serve as the primary CPU for NVIDIA's (NVDA) next-generation flagship AI server, the DGX Rubin NVL8.

This collaboration not only extends the x86 architecture synergy established on the Blackwell platform but also highlights the strategic return of CPUs as core components in AI system architectures at a critical juncture, as AI workloads transition from large-scale training to "ubiquitous real-time inference."

In NVIDIA's DGX Rubin NVL8—a flagship server designed specifically for Agentic AI and inference systems—Xeon 6 processors handle core responsibilities such as task orchestration, memory management, scheduling, and data transfer to GPU accelerators, serving as a vital bridge between computing power and applications.

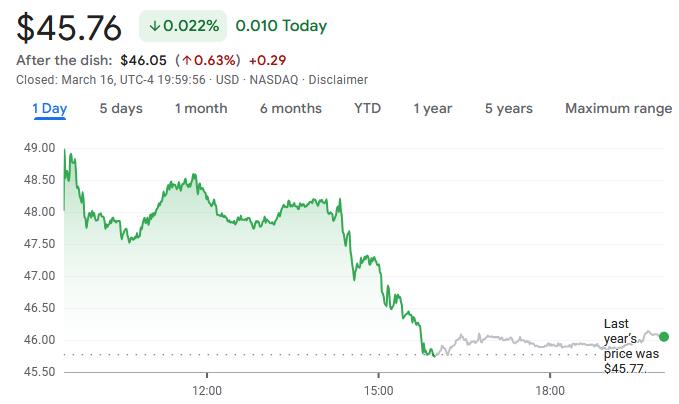

Upon the news of the partnership, Intel's stock price surged as much as 7.4% on Monday, hitting an intraday high of $49.17, before closing down slightly by 0.02% at $45.76, making it one of the worst-performing components of the Philadelphia Semiconductor Index that day.

This "pop and drop" price action reflects the market's ambivalence toward the AI transition of traditional CPU makers. Investors recognize the strategic significance of the Intel-NVIDIA partnership as a key step for Intel to gain a foothold in the AI server market, but they remain cautious about the profitability of its AI business in a GPU-dominated market and want clearer earnings guidance before their confidence grows further.
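The size of the intraday reversal can be checked with simple arithmetic. The sketch below uses only the three figures quoted above (intraday high, close, and the 0.02% daily decline); because those figures are rounded, the implied prior close it derives is approximate.

```python
# Figures reported above (USD and percent).
INTRADAY_HIGH = 49.17
CLOSE = 45.76
CLOSE_CHANGE_PCT = -0.02  # reported daily change at the close


def implied_prior_close(close: float, change_pct: float) -> float:
    """Back out the previous session's close from today's close and % change."""
    return close / (1 + change_pct / 100)


def pct_change(start: float, end: float) -> float:
    """Percentage change from start to end."""
    return (end / start - 1) * 100


prior = implied_prior_close(CLOSE, CLOSE_CHANGE_PCT)
peak_gain = pct_change(prior, INTRADAY_HIGH)   # rise from prior close to the high
giveback = pct_change(INTRADAY_HIGH, CLOSE)    # slide from the high to the close

print(f"implied prior close: ${prior:.2f}")
print(f"peak intraday gain:  {peak_gain:.1f}%")
print(f"high-to-close move:  {giveback:.1f}%")
```

With these inputs the script reports an implied prior close of about $45.77, a peak gain of about 7.4% (matching the reported surge), and a slide of roughly 6.9% from the intraday high to the close.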

However, research firm Lynx Equity Strategies maintains a positive outlook, noting in an analyst report that the integration of Intel Xeon 6 into the NVIDIA DGX Rubin NVL8 system marks the transition of the partnership from technical concept to commercial product. Moving forward, the commercial value of this collaboration can be tracked through product launch timelines and potential revenue contributions.

CPUs Return to Strategic High Ground

From an industry landscape perspective, the significance of this Intel-NVIDIA partnership extends far beyond a simple component supply relationship.

For Intel, this is a well-targeted "strategic defensive" move that challenges the industry perception that "CPUs are irrelevant in the AI era," and supports the case that, in the age of inference, CPU scheduling, memory management, and security features are essential to running AI systems efficiently.

For the broader AI industry, this validates the deep moat of the x86 architecture within the AI ecosystem; its massive install base and mature software ecosystem can effectively lower technical barriers and Total Cost of Ownership (TCO) for enterprise AI transitions. More importantly, it signals that AI infrastructure is moving toward an "architectural rebalancing," where future AI systems will no longer be a solo act for GPUs but rather heterogeneous architectures with multi-chip coordination across CPUs, GPUs, and DPUs.

Notably, Intel's Xeon 6 is integrated into NVIDIA's 8-GPU DGX Rubin NVL8 system rather than the larger-scale NVL72 rack. This continues the partnership logic seen with the Blackwell platform: Xeon processors serve as host processors in standard 8-GPU nodes, handling data preprocessing, model loading, KV cache management, and task coordination.

Industry observers generally believe this is only the starting point of the partnership. As AI inference demand continues to grow, Xeon processors are expected to be further integrated into hyperscale rack systems like the NVL72.
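Of the host duties listed above, KV cache management is the easiest to illustrate. The sketch below is a hypothetical, simplified model of a host that keeps offloaded KV blocks in system memory and evicts the least recently used block when capacity runs out. The class and method names are invented for illustration and do not describe Intel's or NVIDIA's actual software.

```python
from collections import OrderedDict


class HostKVCache:
    """Toy host-memory KV cache with LRU eviction (illustrative only).

    Keys are hypothetical sequence IDs; values are block sizes in bytes,
    standing in for KV blocks offloaded from GPU memory.
    """

    def __init__(self, capacity_bytes: int) -> None:
        self.capacity = capacity_bytes
        self.used = 0
        self.blocks: "OrderedDict[str, int]" = OrderedDict()

    def put(self, seq_id: str, size_bytes: int) -> list:
        """Store a block and return the IDs evicted to make room."""
        if size_bytes > self.capacity:
            raise ValueError("block larger than cache capacity")
        if seq_id in self.blocks:  # replacing an existing block frees its space first
            self.used -= self.blocks.pop(seq_id)
        evicted = []
        while self.used + size_bytes > self.capacity:
            old_id, old_size = self.blocks.popitem(last=False)  # least recently used
            self.used -= old_size
            evicted.append(old_id)
        self.blocks[seq_id] = size_bytes
        self.used += size_bytes
        return evicted

    def get(self, seq_id: str) -> bool:
        """Return True on a hit and mark the block as most recently used."""
        if seq_id not in self.blocks:
            return False
        self.blocks.move_to_end(seq_id)
        return True


cache = HostKVCache(capacity_bytes=100)
cache.put("a", 40)
cache.put("b", 40)
cache.get("a")             # "a" becomes most recently used
print(cache.put("c", 40))  # ['b']: the least recently used block is evicted
```

LRU is used here only because it is simple and common; production inference stacks may use other eviction or prefetch policies.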

Technical Advantages of Intel Xeon 6

Intel attributes Xeon's selection as the core CPU of the DGX Rubin NVL8 system to a range of system-level advantages: high-speed memory support, balanced performance across diverse workloads, long-term cost control, and a mature, market-proven enterprise software ecosystem.

To meet growing demand for KV (key-value) caching of large models during inference, the Xeon 6 platform supports up to 8TB of system memory, a capacity that matters for running large language models and holding their key-value data.
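To give a sense of why KV cache capacity adds up quickly, the sketch below applies the standard KV cache size formula (2 for keys and values, times layers, KV heads, head dimension, bytes per element, and tokens). The model shape (80 layers, 8 KV heads, head dimension 128, FP16 storage) and the 128K-token context are hypothetical values chosen for illustration, not figures from the article, and 8TB is read as decimal terabytes.

```python
def kv_cache_bytes(layers: int, kv_heads: int, head_dim: int,
                   tokens: int, bytes_per_elem: int = 2) -> int:
    """Bytes of KV cache for `tokens` tokens of one sequence.

    The leading 2 counts one key and one value vector per KV head per layer;
    bytes_per_elem=2 assumes FP16/BF16 storage.
    """
    return 2 * layers * kv_heads * head_dim * bytes_per_elem * tokens


# Hypothetical model shape, chosen for illustration (not from the article).
LAYERS, KV_HEADS, HEAD_DIM = 80, 8, 128
CONTEXT_TOKENS = 128 * 1024       # one 128K-token context
HOST_MEMORY_BYTES = 8 * 10**12    # the 8TB figure, read as decimal terabytes

per_token = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, tokens=1)
per_sequence = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, tokens=CONTEXT_TOKENS)
sequences_that_fit = HOST_MEMORY_BYTES // per_sequence  # ignores OS, weights, etc.

print(per_token // 1024, "KiB per token")
print(per_sequence // 2**30, "GiB per 128K-token sequence")
print(sequences_that_fit, "full sequences fit in 8 TB")
```

Under these assumptions each token needs 320 KiB, a full 128K-token context needs 40 GiB, and about 186 such contexts would fit in 8TB before accounting for the operating system, model weights, or other overhead.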

In addition, with MRDIMM technology, Xeon 6 delivers 2.3x the memory bandwidth of the previous generation, with memory speeds of up to 8800 MT/s. The extra bandwidth speeds up data transfer to GPU accelerators and helps ease the GPU data-feed bottleneck that is common in inference.
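The 8800 MT/s figure can be converted into a theoretical peak bandwidth with one multiplication per step: transfers per second, times bytes per transfer, times channels. The sketch assumes a 64-bit (8-byte) data path per channel, excluding ECC bits, and a 12-channel socket; channel count varies by Xeon 6 SKU, so treat the per-socket result as an illustration rather than a specification.

```python
TRANSFER_RATE_MTS = 8800   # MRDIMM speed quoted above, in megatransfers/s
BYTES_PER_TRANSFER = 8     # 64-bit data path per channel (ECC bits excluded)
CHANNELS = 12              # assumption: a 12-channel socket; varies by SKU


def peak_bandwidth_bytes(rate_mts: int, bytes_per_transfer: int, channels: int) -> int:
    """Theoretical peak bytes/s; sustained bandwidth is always lower."""
    return rate_mts * 10**6 * bytes_per_transfer * channels


per_channel = peak_bandwidth_bytes(TRANSFER_RATE_MTS, BYTES_PER_TRANSFER, 1)
per_socket = peak_bandwidth_bytes(TRANSFER_RATE_MTS, BYTES_PER_TRANSFER, CHANNELS)

print(f"per channel: {per_channel / 1e9:.1f} GB/s")
print(f"per socket:  {per_socket / 1e9:.1f} GB/s")
```

This gives 70.4 GB/s per channel and 844.8 GB/s for the assumed 12 channels. The calculation does not attempt to reproduce Intel's 2.3x generational comparison, which depends on the baseline Intel chose.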

In terms of system connectivity, Xeon 6 offers PCIe 5.0 lanes that enable high-bandwidth accelerator connections, ensuring efficient data flow across multi-GPU clusters.
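For a rough sense of what a PCIe 5.0 accelerator link carries, the sketch below converts the 32 GT/s per-lane signalling rate into bytes per second after 128b/130b line encoding. It deliberately ignores packet and protocol overhead, so real throughput is lower; the lane counts shown are just common link widths, not a statement about how many lanes Xeon 6 exposes.

```python
RAW_GT_PER_LANE = 32             # PCIe 5.0 signalling rate, gigatransfers/s per lane
ENCODING_EFFICIENCY = 128 / 130  # 128b/130b line encoding


def pcie5_peak_gbytes(lanes: int) -> float:
    """Peak one-direction data rate in GB/s, before packet/protocol overhead."""
    bits_per_s = RAW_GT_PER_LANE * 1e9 * ENCODING_EFFICIENCY * lanes
    return bits_per_s / 8 / 1e9


for lanes in (1, 4, 16):
    print(f"x{lanes:<2}: ~{pcie5_peak_gbytes(lanes):.1f} GB/s per direction")
```

An x16 link, the usual width for a GPU, works out to roughly 63 GB/s in each direction.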

Intel's "Priority Core Turbo" feature targets the complex scheduling requirements of inference. It lets a subset of high-priority cores run at higher turbo frequencies while the remaining cores run at base frequency, concentrating strong single-threaded performance on orchestration, scheduling, and data transfer tasks so that GPUs stay highly utilized even under complex multitasking workloads.
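Why host single-thread speed matters to GPU efficiency can be seen in a toy two-stage pipeline: while the GPU computes one batch, the CPU prepares the next, so the slower stage sets the pace. This is a simplified model, not a description of how Priority Core Turbo is implemented; all timings and the clock speedup are hypothetical, and it assumes CPU orchestration time scales linearly with clock speed.

```python
def gpu_utilization(cpu_ms: float, gpu_ms: float) -> float:
    """Steady-state GPU busy fraction when CPU prep of the next batch
    overlaps GPU compute of the current one (two-stage pipeline)."""
    step_ms = max(cpu_ms, gpu_ms)  # the slower stage sets the pace
    return gpu_ms / step_ms


GPU_MS = 10.0        # hypothetical GPU compute time per step
CPU_MS_BASE = 12.0   # hypothetical CPU orchestration time at base clock
CLOCK_SPEEDUP = 1.5  # hypothetical clock boost for the high-priority cores

cpu_ms_boosted = CPU_MS_BASE / CLOCK_SPEEDUP  # assumes CPU work scales with clock

print(f"base:    {gpu_utilization(CPU_MS_BASE, GPU_MS):.0%}")
print(f"boosted: {gpu_utilization(cpu_ms_boosted, GPU_MS):.0%}")
```

In this model the GPU sits idle about 17% of the time when the host is the bottleneck, and the idle time disappears once faster cores bring CPU preparation below the GPU's compute time. Real systems also contend with memory stalls, I/O, and uneven batch sizes, which the model ignores.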

On security, Xeon 6 processors use Intel Trust Domain Extensions (TDX) to protect the entire data path from CPU to GPU. Encrypted bounce buffers add hardware-based isolation and authentication, making the platform well suited to confidential computing requirements for AI inference across data center, cloud, and edge deployments.

Xeon 6 also adds support for NVIDIA Dynamo, an inference orchestration framework. With Dynamo, CPU and GPU resources in the same cluster can be scheduled flexibly as heterogeneous resources, further improving overall system efficiency.

This content was translated using AI and reviewed for clarity. It is for informational purposes only.
