Anthropic Strikes $100 Billion Computing Deal With Amazon in Drive to Rival OpenAI

Amazon announced a $5 billion investment in Anthropic, with potential to reach $25 billion. Anthropic committed to over $100 billion in AWS computing resources and AI chip capacity over ten years. This partnership addresses Anthropic's computing power shortages, which have impacted service reliability and customer retention. The deal enhances Anthropic's infrastructure using AWS's Trainium2 and Trainium3 chips, aiming for a cost advantage over competitors like OpenAI, which relies heavily on expensive Nvidia GPUs. This move positions Anthropic to capitalize on its recent revenue growth and potentially surpass OpenAI.

TradingKey - On Monday, Amazon (AMZN) announced an additional $5 billion investment in Anthropic; in exchange, Anthropic committed to purchasing over $100 billion in computing resources from Amazon Web Services (AWS) over the next decade and will utilize up to 5 gigawatts of Amazon AI chip capacity for the training and inference of Claude models.

Furthermore, if the commercial cooperation between the two parties reaches specific milestones, Amazon's total investment could reach as much as $25 billion.

Because of a shortage of computing power, Claude services have recently suffered persistent downtime, rate limiting, and performance degradation, prompting some customers to switch to other AI platforms and putting business pressure on Anthropic. The agreement can be read as Anthropic's response to this compute shortage.

Earlier this month, Anthropic announced that its annualized revenue had surpassed $30 billion, exceeding OpenAI's $25 billion over the same period, a milestone widely read as a sign that Anthropic has overtaken OpenAI. With its compute crunch now being addressed, can Anthropic build on this momentum and pull decisively ahead of OpenAI?

AWS Alliance Supercharges Capacity

Beyond the up to $25 billion covered by this agreement, Amazon had already invested $8 billion in Anthropic in recent years, and AWS serves as Anthropic's primary cloud service provider.

Anthropic stated that additional computing power will come online over the next three months, with total deployed capacity expected to reach nearly 1 gigawatt by the end of 2026, primarily on AWS's proprietary Trainium2 and Trainium3 chips. Large-scale Trainium2 capacity will be released in the second quarter of this year, Trainium3 capacity is expected to be deployed later this year, and the deal also includes options to procure future chip generations such as Trainium4.

Anthropic currently has over 1 million Trainium2 chips deployed at Project Rainier. The Amazon-owned data center is one of the world's largest AI computing clusters, and those 1 million-plus chips are reserved exclusively for Anthropic.

In addition to AWS, Anthropic also has partnership agreements with other cloud service providers, such as Microsoft (MSFT) Azure and Google Cloud (GOOG) (GOOGL). In October last year, Anthropic signed an agreement with Google worth tens of billions of dollars, granting it access to as many as 1 million of Google's proprietary TPU chips and supporting over 1 gigawatt of computing capacity.

Anthropic stated that demand for Claude from enterprises, developers, and consumers has accelerated significantly this year across its subscription tiers, including Free, Pro, and Max, and that peak demand has strained service reliability, which is why it continues to expand its computing capacity.

Closing the Compute Gap

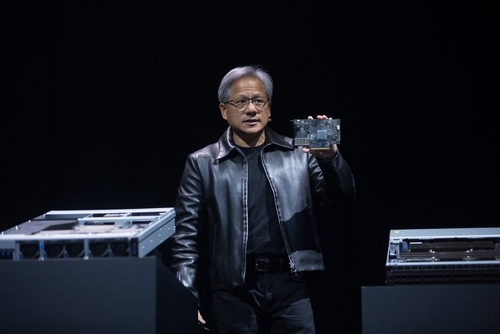

Backed by Microsoft, OpenAI has historically outpaced Anthropic in computing reserves. OpenAI's training costs were projected to reach $3 billion in 2024, with inference costs at $4 billion. Anthropic, by contrast, was expected to lose approximately $2 billion in 2024 on revenue of just a few hundred million dollars. According to one analysis, because Anthropic's inference costs are likely lower, most of that $2 billion probably went to model training; conservative estimates put its training costs at $1.5 billion, half of OpenAI's. Since training spending is almost entirely compute, this gap largely reflects the difference in computing power the two companies could consume.

Microsoft purchased roughly 150,000 Nvidia (NVDA) H100 chips in 2023 and nearly 500,000 in 2024, while Anthropic has already deployed over 1 million Trainium2 chips through Project Rainier alone. Moreover, Microsoft does not allocate all of its computing power to OpenAI. The partnership with Amazon has therefore significantly boosted Anthropic's computing resources relative to its rival.

The Amazon collaboration should not only close Anthropic's computing deficit but also give it a clearer cost advantage over OpenAI. Despite signing major computing deals with Oracle, OpenAI remains highly dependent on Nvidia GPUs, which are expensive to rent and leave little room for price negotiation. Anthropic, meanwhile, can draw on Amazon's proprietary, lower-cost Trainium2 and Trainium3 chips, which are expected to further reduce its unit training and inference costs. That could allow Anthropic to offer strong API performance at lower prices than its rival.

Because Nvidia chips are in such high demand, relying on Amazon's in-house silicon may also let Anthropic bring new computing capacity online faster, helping it iterate its models and capture market share ahead of OpenAI.

This content was translated using AI and reviewed for clarity. It is for informational purposes only.
