From Computing Power to Efficiency: OpenAI vs. Claude Code

OpenAI secured $122 billion in funding, showcasing capital enthusiasm for AI infrastructure, yet the memory spot market plunged, signaling a shift from computing power acquisition to efficiency. OpenAI leads with an $852 billion valuation, a massive user base, and broad technical capabilities, while Anthropic challenges in the enterprise market with deep expertise in programming. The AI industry is transitioning to refined operations, emphasizing efficiency over scale, with OpenAI maintaining its dominant position despite ongoing challenges in enterprise adoption and occasional technical limitations.

TradingKey — On the first day of April 2026, the AI industry witnessed a dramatic juxtaposition: while OpenAI closed the largest funding round in Silicon Valley history amid soaring capital enthusiasm, the memory spot market suffered a "cliff-like" plunge, casting a sudden chill over the sector. These seemingly contradictory phenomena actually point to the same underlying trend—the AI infrastructure race is pivoting from the brute-force stacking of computing power toward a more refined efficiency revolution.

Amidst this profound industrial transformation, the "same-origin" rivalry between OpenAI and Anthropic has emerged as the definitive case study for tracking the evolution of the AI industry landscape.

I. Capital Signals: The Dual Narrative Behind $122 Billion

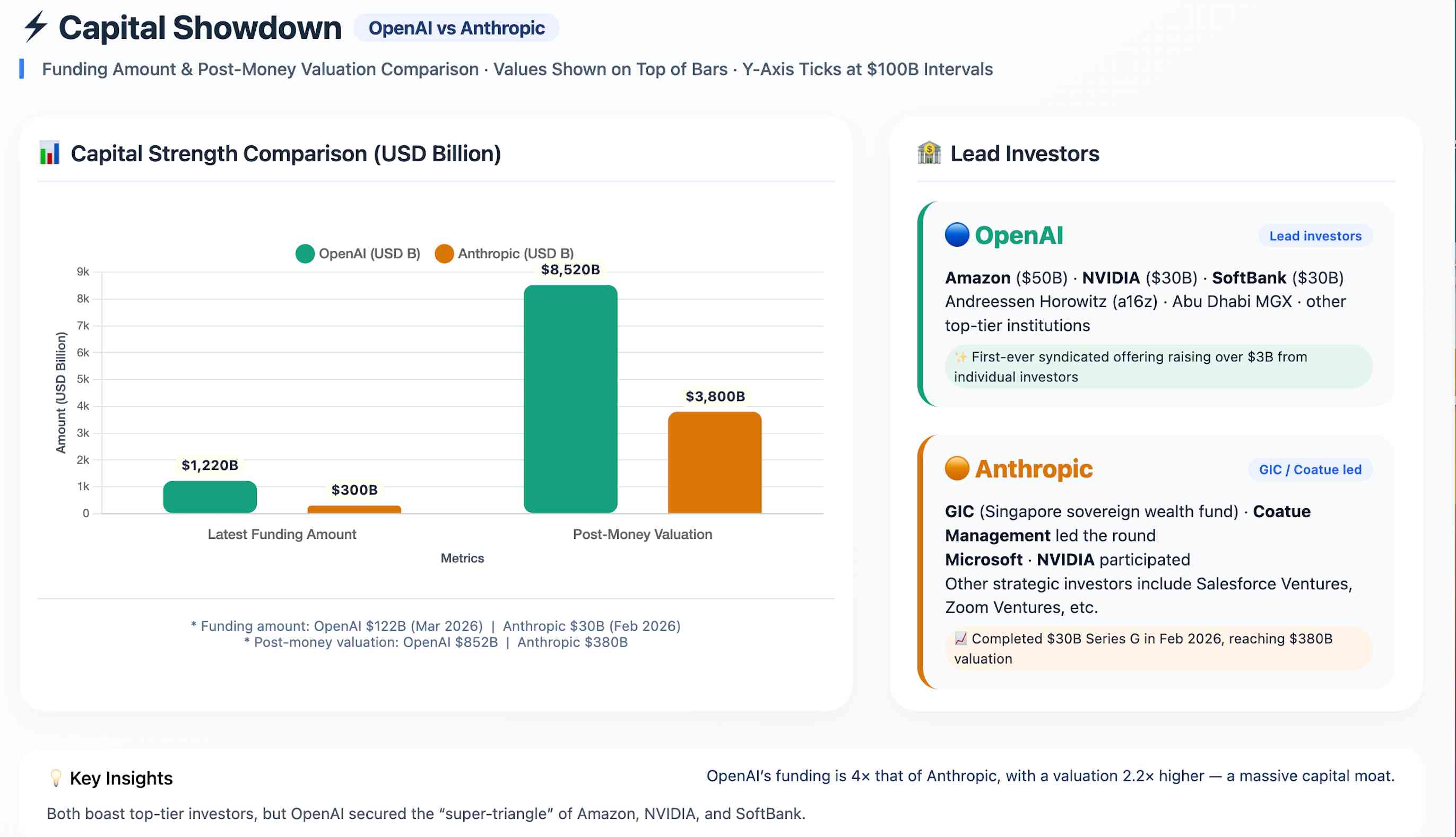

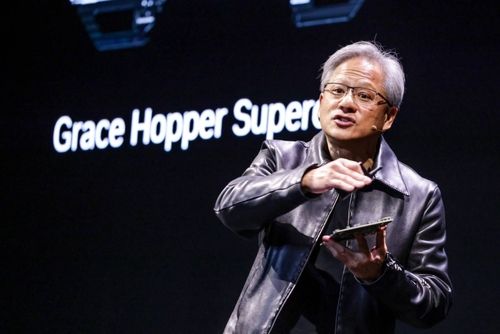

On March 31, OpenAI announced the completion of a financing deal totaling $122 billion, with the company's valuation soaring to $852 billion, setting a new record in Silicon Valley history. The investor lineup is prestigious: Amazon (AMZN) committed $50 billion, while Nvidia (NVDA) and SoftBank each contributed $30 billion, with top-tier institutions such as Andreessen Horowitz and Abu Dhabi's MGX also joining the round. Notably, OpenAI raised over $3 billion from individual investors through banking channels for the first time, further diversifying its funding sources.

Capital markets reacted swiftly: on the same day, the Nasdaq index rose 3.83%, Nvidia and Google (GOOGL) each rose by over 5%, and Meta (META) climbed by more than 6%. Capital is confirming a key thesis: the AI infrastructure build-out cycle is far from over, and the industry remains in a phase of high capital expenditure.

However, the larger the financing, the greater the challenges. Amazon's $35 billion investment is directly linked to OpenAI's future IPO timeline. Although OpenAI's monthly revenue has reached $2 billion, with enterprise clients accounting for over 40% of total income, substantial losses continue to cast a shadow over its long-term operations. More importantly, this massive round of funding needs to prove not just 'scale' but 'efficiency.'
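For a rough sense of scale, the revenue figures above can be turned into an annualized run rate and a valuation multiple. The following is a minimal sketch, not a valuation model: it assumes revenue stays flat at the quoted monthly level, treats "over 40%" as a lower bound, and uses the $852 billion valuation cited earlier. The variable and function names are illustrative.

```python
# Figures quoted in the article, in USD billions.
MONTHLY_REVENUE_B = 2.0
VALUATION_B = 852.0
ENTERPRISE_SHARE_MIN = 0.40  # "over 40%", treated here as a lower bound


def annualized_run_rate(monthly_revenue_b: float) -> float:
    """Naive run rate: latest monthly revenue times 12 (assumes flat revenue)."""
    return monthly_revenue_b * 12


run_rate_b = annualized_run_rate(MONTHLY_REVENUE_B)
valuation_multiple = VALUATION_B / run_rate_b
enterprise_monthly_min_b = MONTHLY_REVENUE_B * ENTERPRISE_SHARE_MIN

print(f"Annualized run rate: ${run_rate_b:.0f}B")
print(f"Valuation / run rate: {valuation_multiple:.1f}x")
print(f"Enterprise revenue per month: at least ${enterprise_monthly_min_b:.1f}B")
```

Under these assumptions the valuation sits at roughly 35 times annualized revenue, which is one way to read the article's point that the round must now prove efficiency rather than scale.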

Comparing OpenAI's financing with that of Anthropic provides a clearer view of the capital landscape:

OpenAI's financing round is more than four times the size of Anthropic's, and its valuation is about 2.2 times Anthropic's. This overwhelming capital advantage gives OpenAI stronger computing power procurement capabilities, more competitive talent compensation, and more room for strategic trial and error. In the 'cash-burning' game of AI, capital advantage is the most fundamental competitive barrier.
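The two ratios can be inverted to see what they imply about Anthropic. This is a back-of-the-envelope sketch that uses only numbers quoted in this article; it assumes "2.2 times" means OpenAI's valuation is 2.2 times Anthropic's (not 2.2 times larger on top of it) and treats "more than four times" as a floor on the ratio. The outputs are implied figures, not reported ones.

```python
# Figures quoted in the article, in USD billions.
OPENAI_FINANCING_B = 122.0
OPENAI_VALUATION_B = 852.0

# Ratios quoted in the article.
FINANCING_RATIO_MIN = 4.0  # "more than four times", so a floor on the ratio
VALUATION_RATIO = 2.2      # assumed to mean OpenAI = 2.2 x Anthropic

# A ratio of at least 4 puts a ceiling on Anthropic's implied round size.
implied_anthropic_financing_max_b = OPENAI_FINANCING_B / FINANCING_RATIO_MIN
implied_anthropic_valuation_b = OPENAI_VALUATION_B / VALUATION_RATIO

print(f"Implied Anthropic financing: under ${implied_anthropic_financing_max_b:.1f}B")
print(f"Implied Anthropic valuation: about ${implied_anthropic_valuation_b:.0f}B")
```

Read this way, the comparison implies an Anthropic round of under about $30.5 billion at a valuation near $387 billion.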

II. Strategic Adjustment: From Extensive Expansion to Refined Positioning

Behind the financing news, OpenAI is adjusting the pace of its global infrastructure expansion.

According to the Star Market Daily, the Abilene campus in Texas, co-developed by OpenAI and Oracle (ORCL), has reached a scale of 1.2 GW in its first phase. The latest adjustments show that OpenAI is transferring 700 MW of Phase II capacity to its partner Microsoft (MSFT), shifting its resource layout from single-point expansion to a diversified configuration. While some media outlets have interpreted this as a signal that OpenAI is cutting costs, a more reasonable explanation is that OpenAI is moving from extensive expansion toward refined deployment.

Meanwhile, OpenAI is deepening supply chain cooperation with Samsung and SK Hynix. SK Hynix will establish a production system to meet a monthly demand of up to 900,000 HBM wafers—equivalent to more than double the global HBM capacity; Samsung will participate in the construction of the "Stargate Korea" data center in South Korea.

This strategy of "scaling back expansion in certain regions while deepening core supply chain ties" reflects OpenAI's construction of a resilient computing network: through multi-party cooperation with Amazon, NVIDIA, and Microsoft, it breaks dependency on a single supplier and gains greater bargaining power and cost control in computing procurement.

This echoes shifts in the memory market, where the spot market has been "across-the-board in the red" since mid-March. Prices for mainstream DDR4 memory modules have led the decline, with 8GB and 16GB products falling by as much as 25%. In business districts like Shenzhen's Huaqiangbei and Hangzhou's Buynow, some wholesale prices have even dropped below factory prices. The sudden price drop is driven by both the cooling of demand due to Google's TurboQuant compression algorithm and the amplification of channel pressure.

Notably, price reductions in the memory market show a clear divergence: consumer-grade DDR4 is in a freefall, while enterprise-grade and AI-specific memory modules maintain high-level volatility as cloud vendors locked in supplies early. This divergence confirms that the AI industry is transitioning from "stacking hardware" to improving efficiency.

III. User Scale: Consumer Dominance and Enterprise Challenges

User base size is a direct indicator of AI product market penetration and the bedrock for commercialization.

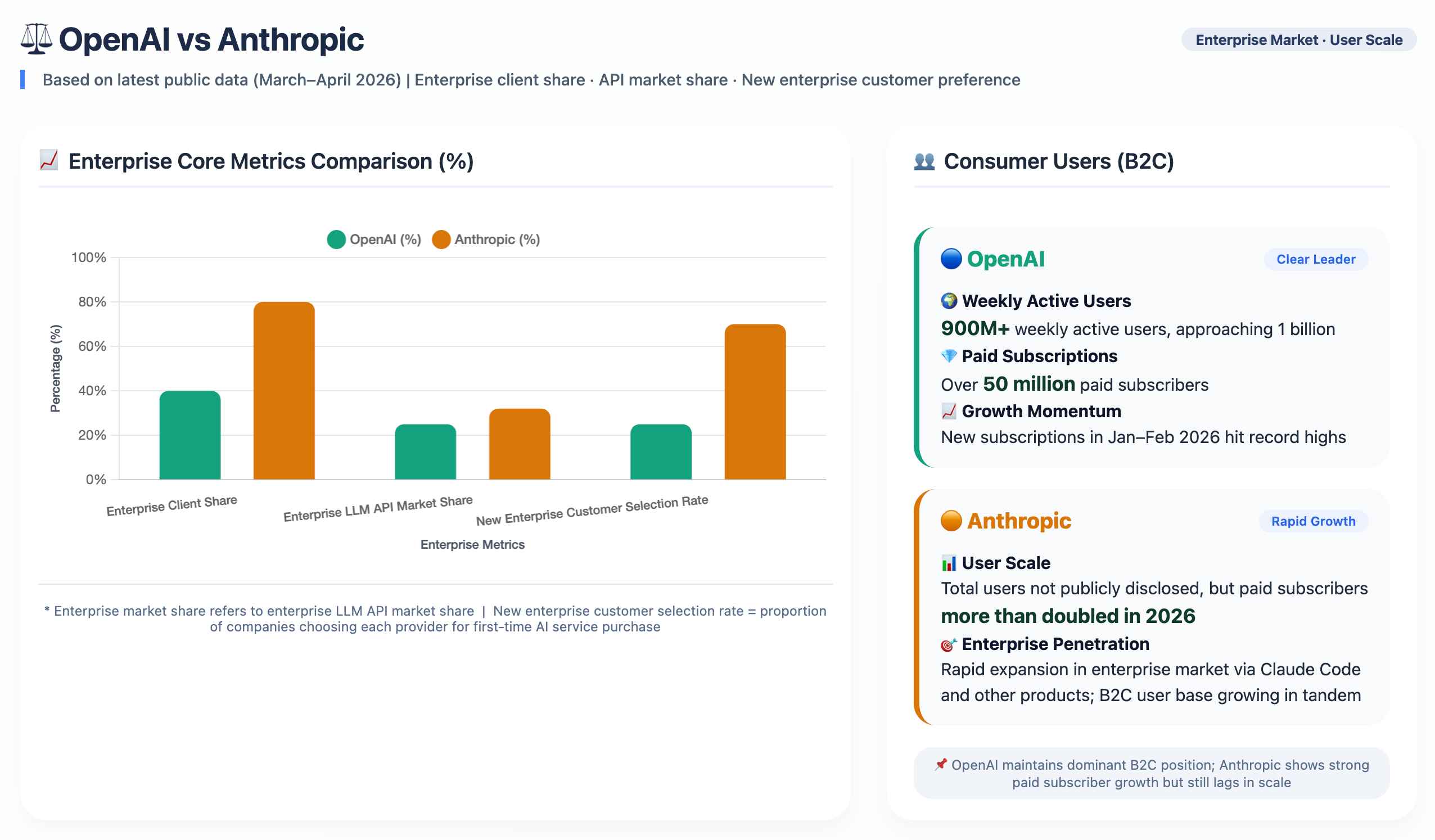

In the consumer market, OpenAI holds an undisputed dominant position, with ChatGPT's weekly active users surpassing 900 million and nearing the 1 billion milestone, while paid subscribers exceed 50 million. This user base is currently out of Anthropic's reach. Furthermore, OpenAI's consumer advantage is reflected not only in volume but also in growth momentum; since early 2026, subscription growth has significantly accelerated, with new subscriber numbers in January and February hitting record monthly highs.

However, in the enterprise market, OpenAI is facing a formidable challenge from Anthropic. Anthropic derives as much as 80% of its revenue from enterprise customers, compared with roughly 40% for OpenAI. In the enterprise LLM API market, Anthropic has overtaken OpenAI with a 32% share against 25%. Even more concerning, among enterprises purchasing AI services for the first time, the selection rate for Anthropic is already triple that of OpenAI.

Faced with this situation, OpenAI has sounded the alarm. At an all-hands meeting in March 2026, Fidji Simo, head of the applications business, explicitly labeled Anthropic's lead in the enterprise market a 'red alert' and announced the consolidation of ChatGPT, Codex, and the Atlas browser into a unified desktop super-app, refocusing on coding and enterprise clients.

IV. Product Competitiveness: Respective Strengths and the Dominance of Two Giants

Product capabilities are the ultimate decisive battlefield, and here OpenAI and Anthropic have taken starkly different paths: OpenAI builds its ecosystem through "breadth," while Anthropic establishes moats through "depth."

OpenAI's advantage lies in a dual-engine drive: first, the breadth of its technical capabilities, and second, the depth of its specialized expertise.

Regarding breadth, OpenAI has built a comprehensive product matrix spanning from conversation (ChatGPT) to programming (Codex). While this full-stack layout carries the risk of strategic dilution, it preserves more possibilities for exploring next-generation AI application formats.

In terms of depth, GPT-5.4, released in March 2026, demonstrated powerful professional capabilities. The new model achieved a 75% success rate in OSWorld-Verified testing, surpassing the human performance of 72.4%. Meanwhile, model error rates have been significantly optimized, with per-statement error rates falling by 33% and the probability of errors in full responses decreasing by 18%. These advancements indicate that while maintaining leadership in general-purpose capabilities, OpenAI is accelerating its catch-up in professional-grade segments.

Anthropic, conversely, has opted for a distinctly different route: focusing its entire firepower on a single segment—programming.

Claude Code has emerged as a mainstream tool in corporate programming, reaching $2.5 billion in annualized revenue, with 4% of global public GitHub code commits generated automatically by the tool. Eight of the Fortune 10 companies are Claude customers. This ‘narrow and deep’ strategy has enabled it to derive 80% of its revenue from the enterprise market, maintaining a 92% renewal rate among major clients.

Notably, on March 31, approximately 512,000 lines of Claude Code's TypeScript source, spanning more than 1,900 files, were accidentally leaked through an npm packaging error. The incident went viral, with related posts surpassing 21 million views, and inadvertently exposed several unreleased features: the Kairos persistent daemon (enabling ‘always-on’ agents), the Buddy System pet-raising Easter egg, and Undercover Mode (for erasing traces of AI generation).

On the same day, Anthropic announced the formal integration of Computer Use into Claude Code, enabling it not only to read and write code but also to directly operate computer interfaces. Complemented by the Channels feature (which pushes external messages to Claude sessions), Claude Code has fully evolved into a long-running autonomous agent system.

However, rapid expansion has brought hidden liabilities. Two security incidents occurred in a single week (including an earlier leak of nearly 3,000 internal documents via a third-party CMS), and together with the engineering risks they exposed, they have sounded an alarm for Anthropic. At the same time, the era of free AI is receding: monthly bills for high-frequency Claude Code users have reached $150,000, and Google has also scaled back the free tiers for its Gemini CLI.

V. Global Outlook: AI Infrastructure Enters the "Era of Efficiency"

Through the analysis of capital movements, strategic adjustments, and market dynamics, a more comprehensive industrial landscape is gradually coming into focus:

1. Evolution from Scale to Efficiency

OpenAI's massive financing and the decline in memory spot prices are not contradictory. The former reflects capital's confidence in the long-term prospects of AI, while the latter represents short-term dynamic adjustments triggered by technological progress. Google's TurboQuant compression algorithm illustrates that the AI industry is transitioning from "hardware stacking" toward efficiency optimization.

2. Intensification of the Divergent Landscape

Enterprise-grade memory modules and AI-specific products continue to maintain high demand and high added value, whereas the low-end consumer market and intermediary channels face significant pressure to clear inventory. Manufacturers that continuously innovate remain in a dominant position, while those overly dependent on basic products will be increasingly marginalized.

3. A Competitive Landscape of Two Giants Standing Side-by-Side

The current competitive landscape of the AI industry can be summarized as "two giants standing side-by-side, with OpenAI still leading the pack." Anthropic has established deep moats in the enterprise programming market and has become a formidable challenger with staggering revenue growth. However, OpenAI still maintains significant advantages in core dimensions such as capital scale, consumer base, technological breadth, and brand recognition.

4. The Real Test After Technological Breakthroughs

Despite an influx of $122 billion in funding, OpenAI still faces the unresolved "hallucination problem": generative models may still be "confidently wrong" in complex logical processing, requiring substantial human verification. Frontier technological breakthroughs still require time to realize true commercial value conversion. As one industry observer put it: "The performance gap among current top-tier global large models is small, with each having its own strengths; there are currently no absolute technical monopoly barriers that are difficult to overcome in the short term."

Conclusion: Computing Power Race Yields to Efficiency Revolution; OpenAI Still Leads the Pack.

The start of April 2026 marks a new stage for the AI industry, transitioning from "unbridled growth" to "refined operations." Whether it is OpenAI's record-breaking financing, the realignment of computing power layouts, or the sudden plunge in memory prices, these changes collectively point to a core judgment: the acquisition and allocation of computing resources are shifting from a race for scale to a race for efficiency.

In this profound industry shift, OpenAI remains the undisputed leader of the AI sector, bolstered by a $122 billion capital war chest, a traffic moat of 900 million users, and the technical depth of GPT-5.4. While Anthropic’s pursuit is formidable, OpenAI’s dominance is unlikely to be shaken in the short term.

For investors, high-valuation logic is trending toward rationality; for the industry, the deep integration of core technology with supply chains is becoming critical; and for consumers, the short-term decline in memory prices has created opportunities for hardware upgrades, but the true long-term trend still depends on whether innovation can continue to advance. Between the tides of capital and the iteration of technology, whoever can ultimately transform computing power advantages into efficiency advantages will emerge victorious in the next stage of competition.
