Google’s “Compression Revolution” Shakes the AI Computing Thesis: Has Storage Demand Peaked?

Google's TurboQuant AI memory compression technology triggered a sharp sell-off in the U.S. storage chip sector, with SanDisk, Micron, Western Digital, and Seagate all falling intraday. The market read the news as bearish for storage demand because the technology could shrink memory footprints and speed up AI inference. However, it primarily targets inference rather than the high-bandwidth memory (HBM) that is crucial for training, which suggests AI infrastructure demand remains robust. Efficiency gains could also lower the cost of commercializing AI, expanding application scenarios and potentially increasing overall demand for computing power. The current pullback looks like a re-pricing in a high-valuation environment; with AI demand still spreading, the likely outcome is a restructuring of demand rather than a decline.

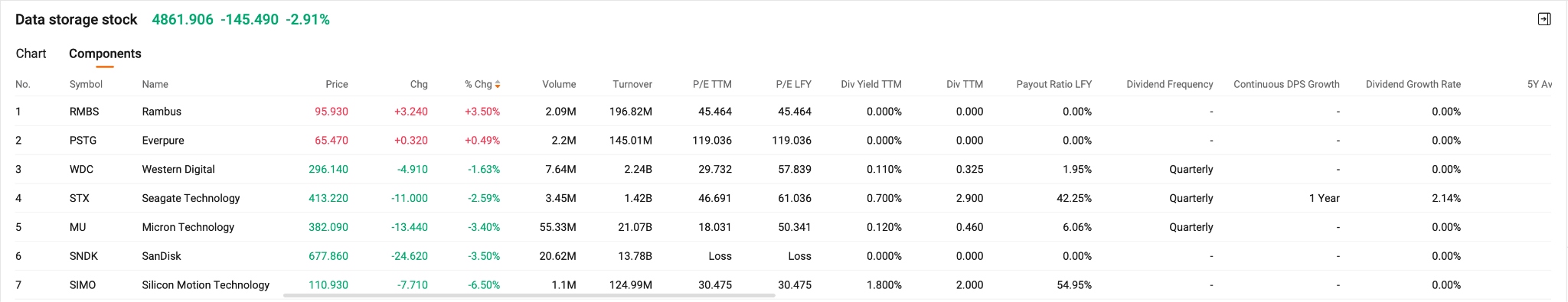

TradingKey - Google's (GOOGL) release of TurboQuant, a new AI memory compression technology, has sparked market concerns over the outlook for storage demand. On the news, the U.S. storage chip sector fell sharply intraday on Wednesday: SanDisk (SNDK) fell as much as 6.5% at one point, Micron Technology (MU) more than 5%, Western Digital (WDC) more than 6%, and Seagate Technology (STX) more than 8%.

In the AI-driven market over the past year, the storage sector has benefited from rising prices for HBM, DRAM, and NAND, pushing valuations to relatively high levels; consequently, any variable potentially weakening demand growth is quickly priced in.

Reportedly, the technology can reduce the key-value (KV) cache memory footprint of large language models (LLMs) at least sixfold without compromising accuracy, while delivering up to an eightfold speedup. It aims to relieve memory bottlenecks in AI inference and vector search.

The core of TurboQuant is extreme compression of memory usage during the inference stage of large models. Without significant loss of model precision, it can compress the KV cache to 3 bits per value, achieving roughly sixfold memory savings and up to an eightfold improvement in inference performance.
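To make "compress to 3 bits" concrete, here is a minimal Python sketch of generic per-vector uniform (min-max) 3-bit quantization. This is a swapped-in textbook technique, not TurboQuant's actual algorithm, which this article does not describe; the names `quantize` and `dequantize` and the sample vector are hypothetical.

```python
from typing import List, Tuple

BITS = 3
LEVELS = (1 << BITS) - 1  # 7: the largest code that fits in 3 bits

def quantize(vec: List[float]) -> Tuple[List[int], float, float]:
    """Generic min-max uniform quantization (an illustration, NOT TurboQuant).
    Returns integer codes in [0, LEVELS] plus the offset and step size needed to decode."""
    lo, hi = min(vec), max(vec)
    scale = (hi - lo) / LEVELS if hi > lo else 1.0  # avoid division by zero for constant vectors
    codes = [min(LEVELS, max(0, round((x - lo) / scale))) for x in vec]
    return codes, lo, scale

def dequantize(codes: List[int], lo: float, scale: float) -> List[float]:
    """Map each 3-bit code back to its grid value lo + code * scale."""
    return [lo + c * scale for c in codes]

if __name__ == "__main__":
    key = [0.12, -0.5, 0.33, 0.9, -0.1]  # a made-up slice of a key vector
    codes, lo, scale = quantize(key)
    approx = dequantize(codes, lo, scale)
    print(codes)
    print([round(a, 3) for a in approx])
```

Each value is reconstructed to within half a step (`scale / 2`). For simplicity the sketch keeps one code per Python integer; a real system would pack the 3-bit codes tightly and must also store the per-vector offset and scale, which is one reason reported savings depend on implementation details.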

In essence, this breakthrough does not undermine AI demand; rather, it significantly improves efficiency per unit of computing power, allowing the same hardware to handle more inference tasks.
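A back-of-the-envelope sketch shows why a smaller KV cache lets the same hardware serve more work. Everything here is a hypothetical assumption: the model shape (32 layers, 8 KV heads, head dimension 128), the 16-bit baseline, the 40 GiB cache budget, and 8,192-token sequences. Quantization metadata is ignored, so the naive bit-width ratio (16/3 ≈ 5.3x) is not the same as the sixfold figure reported for TurboQuant.

```python
GIB = 1 << 30

def kv_bytes_per_token(layers: int, kv_heads: int, head_dim: int, bits: int) -> int:
    """Bytes of KV cache per token: one key and one value vector per layer per KV head.
    Ignores quantization scales and other metadata (simplifying assumption)."""
    return 2 * layers * kv_heads * head_dim * bits // 8

def sequences_that_fit(budget_bytes: int, seq_len: int, bytes_per_token: int) -> int:
    """How many full-length sequences fit in a fixed KV-cache memory budget."""
    return budget_bytes // (seq_len * bytes_per_token)

# Hypothetical model shape and serving budget, chosen only for illustration.
LAYERS, KV_HEADS, HEAD_DIM = 32, 8, 128
BUDGET = 40 * GIB
SEQ_LEN = 8192

baseline = kv_bytes_per_token(LAYERS, KV_HEADS, HEAD_DIM, bits=16)
compressed = kv_bytes_per_token(LAYERS, KV_HEADS, HEAD_DIM, bits=3)

if __name__ == "__main__":
    print(f"16-bit: {baseline} B/token, {sequences_that_fit(BUDGET, SEQ_LEN, baseline)} sequences")
    print(f"3-bit:  {compressed} B/token, {sequences_that_fit(BUDGET, SEQ_LEN, compressed)} sequences")
    print(f"naive ratio: {baseline / compressed:.2f}x")
```

Under these assumptions the 16-bit cache holds 40 concurrent sequences in the budget, while the 3-bit cache holds 213: the same memory serves roughly five times as many requests.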

The market's reaction shows that investors have read this technology as a "bearish signal for storage."

However, based on current information, the technology primarily targets the inference phase. It does not affect the training side's hard dependence on high-bandwidth memory, nor can it replace the central role of large-scale computing clusters in model training. This implies that the foundation of AI infrastructure demand remains solid, even as the ways resources are used evolve.

Looking further ahead, such efficiency gains may actually result in "demand expansion." As inference costs drop significantly and the barrier to commercializing AI applications falls, more enterprises and developers will be able to deploy large model services, and usage will become more frequent. In this process, overall computing power consumption need not decrease; instead, it could rise as application scenarios expand. In other words, under certain conditions efficiency improvements can stimulate growth in total demand.

The current pullback in the storage sector appears to be a re-pricing of expectations in a high-valuation environment rather than a weakening of fundamentals; in the long term, the trend of AI demand diffusion remains unchanged and may even be further strengthened by falling costs.

The impact of Google's TurboQuant is essentially not a "disappearance of demand" but a "restructuring of demand." As AI efficiency continues to improve, investors should focus on which storage companies can retain their pricing power within the new industry structure.

This content was translated using AI and reviewed for clarity. It is for informational purposes only.
