Nvidia GTC 2026 Preview: Two Major Architectures Launch Together, Can They Solve the AI Anxiety Dilemma?

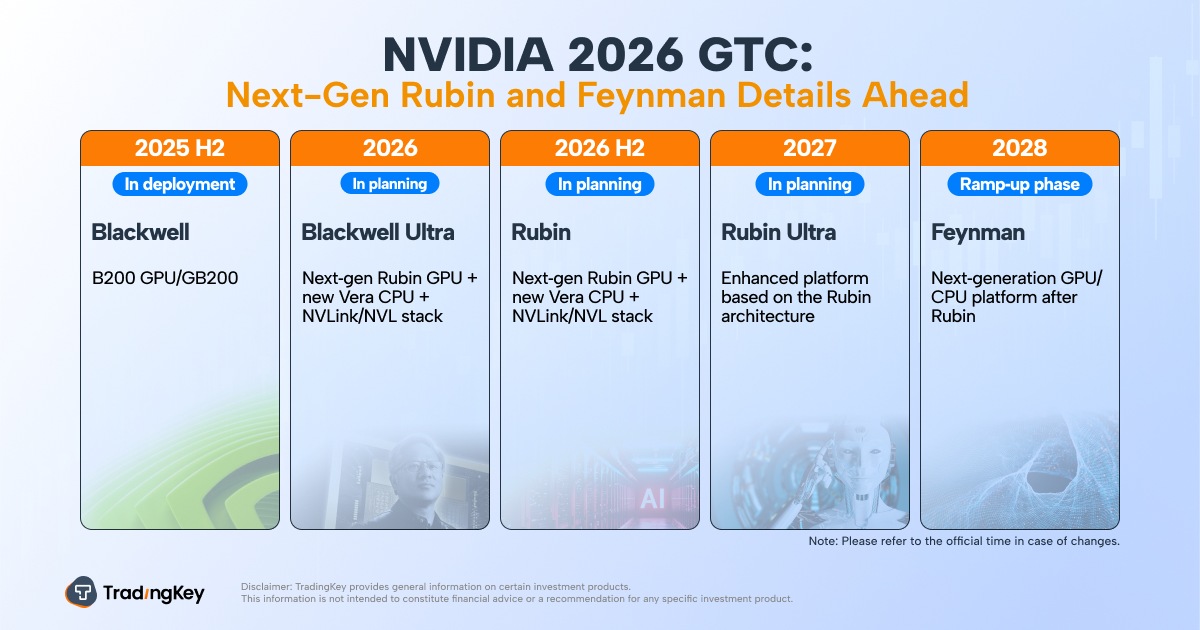

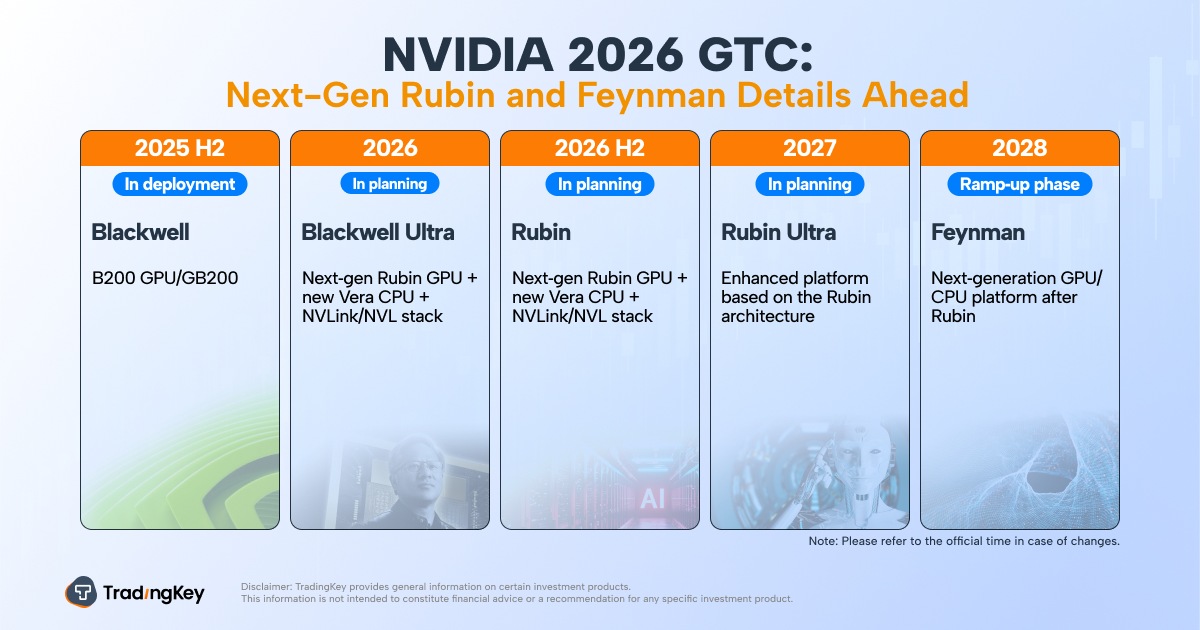

NVIDIA's GTC 2026 will unveil next-gen GPUs like Rubin, featuring HBM4 memory and a new Vera CPU, promising significant inference and training performance gains. The platform aims to lower AI adoption barriers. Potential early samples of the 2028 Feynman architecture, possibly using TSMC's 1.6nm A16 process with silicon photonics, may also be showcased. Market sentiment for AI is cautious, with investors seeking near-term profitability. NVIDIA's GTC performance is crucial for solidifying its leadership and influencing broader AI industry confidence.

TradingKey - NVIDIA's (NVDA) GTC 2026 conference, the highest-profile annual event in the global AI computing sector, will run from March 16 to 19.

Dubbed the "Super Bowl of AI," the event is expected not only to unveil the core technical parameters of next-generation GPUs such as Rubin and "Feynman," but also to showcase the latest breakthroughs and commercialization progress in computing infrastructure, including CPO switches, new power architectures, and high-efficiency liquid cooling.

Jensen Huang, founder and CEO of NVIDIA, stated: "GTC is the central hub of the industrial AI era. AI is no longer a single technological breakthrough or application scenario, but an indispensable infrastructure driving the development of various industries. In the future, every company will embrace AI technology, and every nation will build AI infrastructure. From energy supply and chip manufacturing to data center construction, AI model development, and industrial applications, every layer of the AI tech stack is advancing in synergy, all of which will be fully showcased at the GTC conference."

Currently, the mass production progress of Blackwell Ultra chips, the official launch of the next-generation "Rubin" architecture, and evolving geopolitical trade rules are collectively creating a highly complex business environment for this global AI computing giant.

Investors and industry observers are closely monitoring the developments of this GTC conference, expecting to see whether NVIDIA can further solidify its leadership in the AI computing sector by releasing breakthrough technological achievements.

The Performance Revolution of the Vera Rubin Architecture

As a core milestone on NVIDIA's AI technology roadmap, the official debut of the Vera Rubin architecture is one of the event's key focal points.

Unlike the previous Blackwell architecture, Vera Rubin will feature NVIDIA's proprietary Vera CPU, replacing the current Grace CPU, and will be paired with sixth-generation High Bandwidth Memory (HBM4), achieving a comprehensive upgrade from computing cores to memory architecture.

According to officially disclosed technical parameters, the flagship Vera Rubin model, the VR200 NVL72, is expected to deliver 3.3 times the overall inference performance of the Blackwell Ultra GB300 NVL72. Its HBM4 memory bandwidth requirement exceeds 3.0 TB/s, with operating speeds reaching over 11 Gbps, a core specification 30% higher than comparable products from AMD.
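As a rough sanity check on how the bandwidth and pin-speed figures above relate, the sketch below converts a per-pin data rate into peak bandwidth. It assumes that the 3.0 TB/s figure is per HBM4 stack and that each stack uses the 2048-bit interface defined in the JEDEC HBM4 standard; the article states neither, and the function names are illustrative.

```python
# Assumption (not stated in the article): each HBM4 stack exposes a
# 2048-bit interface, as in the JEDEC HBM4 standard, and the quoted
# 3.0 TB/s figure is a per-stack number.
HBM4_INTERFACE_BITS = 2048


def stack_bandwidth_tb_per_s(pin_rate_gbps: float,
                             interface_bits: int = HBM4_INTERFACE_BITS) -> float:
    """Peak bandwidth of one stack in TB/s (decimal) for a per-pin rate in Gb/s."""
    gigabits_per_s = pin_rate_gbps * interface_bits
    gigabytes_per_s = gigabits_per_s / 8
    return gigabytes_per_s / 1000


def pin_rate_for_bandwidth(target_tb_per_s: float,
                           interface_bits: int = HBM4_INTERFACE_BITS) -> float:
    """Per-pin rate in Gb/s needed to reach a target per-stack bandwidth."""
    return target_tb_per_s * 1000 * 8 / interface_bits


if __name__ == "__main__":
    print(stack_bandwidth_tb_per_s(11.0))   # bandwidth at exactly 11 Gb/s per pin
    print(pin_rate_for_bandwidth(3.0))      # pin rate needed for 3.0 TB/s
```

Under these assumptions, 11 Gbps per pin works out to roughly 2.8 TB/s per stack, and exceeding 3.0 TB/s needs about 11.7 Gbps, which is consistent with the "over 11 Gbps" wording.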

Meanwhile, the Rubin platform introduces five core innovative technologies, including next-generation NVIDIA NVLink interconnect technology, an upgraded Transformer Engine, confidential computing modules, a RAS reliability engine, and the proprietary NVIDIA Vera CPU.

These technological breakthroughs will bring significant performance gains. The token cost for Agentic AI, advanced reasoning, and hyper-scale Mixture-of-Experts (MoE) model inference will drop to one-tenth of that of the NVIDIA Blackwell platform; meanwhile, in MoE model training, the required number of GPUs will be only one-quarter of the previous generation, significantly lowering the barrier to AI adoption and accelerating its accessibility and penetration.
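To make the two ratios above concrete, here is a minimal sketch that applies them to a Blackwell baseline. The baseline numbers in the usage example (2.00 dollars per million tokens, 1,024 training GPUs) are hypothetical placeholders, not figures from NVIDIA or this article; only the one-tenth and one-quarter ratios come from the text.

```python
import math

# Ratios quoted in the article for Rubin relative to Blackwell.
TOKEN_COST_RATIO = 1 / 10     # inference token cost
TRAINING_GPU_RATIO = 1 / 4    # GPUs needed for MoE training


def rubin_token_cost(blackwell_cost_per_million_tokens: float) -> float:
    """Implied Rubin token cost if the claimed one-tenth ratio holds."""
    return blackwell_cost_per_million_tokens / 10


def rubin_training_gpus(blackwell_gpu_count: int) -> int:
    """Implied Rubin GPU count for the same MoE training job, rounded up."""
    return math.ceil(blackwell_gpu_count / 4)


if __name__ == "__main__":
    # Hypothetical baseline values, chosen only for illustration.
    baseline_cost = 2.00      # dollars per million tokens on Blackwell
    baseline_gpus = 1024      # GPUs used for a Blackwell MoE training run
    print(rubin_token_cost(baseline_cost))
    print(rubin_training_gpus(baseline_gpus))
```

With those placeholder inputs the claimed ratios imply 0.20 dollars per million tokens and 256 GPUs. Actual savings would depend on workload, utilization, and pricing that the article does not specify.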

In fact, NVIDIA founder Jensen Huang revealed as early as the CES exhibition in Las Vegas on January 5, 2026, that Vera Rubin had entered full-scale mass production.

The entire Rubin platform consists of six brand-new chips specifically designed for building hyper-scale AI supercomputers, with the core objective of helping enterprises build, deploy, and securely run the world's largest and most advanced AI systems at the lowest total cost of ownership, accelerating the widespread application of mainstream AI technology across industries.

Huang stated at the time: "The computing demand for current AI training and inference is growing exponentially, and the launch of the Rubin platform is perfectly timed. With our R&D pace of iterating an AI supercomputer every year and the collaborative optimized design of six new chips, the Rubin platform represents a pivotal step forward for the development of AI technology."

Feynman Architecture: The Hidden Focus of GTC 2026

Beyond the Vera Rubin architecture, another closely watched question is whether NVIDIA will put early samples of the Feynman architecture, originally scheduled for release in 2028, on static display at this GTC conference.

NVIDIA founder Jensen Huang previously hinted that his keynote would showcase "never-before-seen" technologies, a statement that has led investors to anticipate that a new round of product iteration cycles and key supply chain choices are about to be confirmed, particularly strategic trade-offs in advanced process nodes and packaging forms.

According to previous reports, the Feynman architecture will utilize TSMC's (2330) A16 1.6nm process technology and introduce silicon photonics for the first time, using optical signals instead of traditional electrical signals to transmit data.

Tech news outlet Wccftech believes that if Feynman does indeed adopt the TSMC A16 process, NVIDIA will become the first, and potentially only, customer for large-scale mass production in the early stages of this node. This would tie market expectations for TSMC's A16 capacity ramp-up and yield improvements closely to NVIDIA's product cadence, further strengthening NVIDIA's influence in the advanced process sector.

TSMC's (TSM) A16 1.6nm node is considered a major leap in semiconductor manufacturing and has been referred to in the industry as the "world's smallest node technology." Its core technical highlight is the Super Power Rail (SPR) architecture, which delivers power through the back side of the wafer.

Wccftech points out that NVIDIA will be the core customer for the initial mass production of the A16 node, whereas mobile customers may require more time to adapt to this process standard due to the need for deep architectural overhauls. This means that the utilization and introduction pace of early A16 capacity will revolve significantly around NVIDIA's product strategy.

Beyond the generational leap in manufacturing processes, some analysts speculate that the Feynman architecture might integrate Groq's LPU (Language Processing Unit) hardware stack for the first time. The rationale is that latency is becoming one of the key performance metrics for AI computing vendors, particularly in scenarios such as real-time inference and conversational AI, where low-latency capabilities directly impact user experience.

Market speculation suggests that NVIDIA might adopt a "hybrid bonding" technology path, incorporating LPU units as an on-package option, an approach that has been compared to AMD's X3D processors.

However, Wccftech also noted that such integration would significantly increase chip design and production difficulty, meaning that even if the technical direction is clear, the implementation pace could still be constrained by engineering complexity and manufacturing maturity, thereby affecting the mass production timeline.

NVIDIA's Key Role

Current market sentiment has shifted significantly from the past belief that AI investment would inevitably yield returns toward a more cautious stance. Investor interest in the long-term strategy of "investing heavily first and waiting for future returns" has declined, with focus turning toward AI business models capable of achieving profitability in the near term.

Since the second half of 2025, a cloud of AI anxiety has lingered over overseas capital markets. From its stock price peak in late October 2025 to the present, NVIDIA has seen a cumulative decline of over 11%, a volatility that fully reflects market divergence and concerns regarding the prospects of the AI industry.

NVIDIA founder Jensen Huang has previously stated publicly that the "AI doomsday" rhetoric promoted by some is negatively impacting the global tech industry, even deterring companies and investors who originally intended to enter the AI space.

He believes the AI field is currently undergoing a "war of narratives": one side views the prospects of AI development as bleak and filled with unknown risks and challenges, while the other remains optimistic about the future of AI, firmly believing it will drive human progress.

Huang admitted that while simply dismissing either side would be too absolute, those extremely pessimistic narratives are tangibly affecting market confidence and investment decisions.

If NVIDIA can deliver a convincing showing at this GTC conference, proving that its expensive computing clusters are not just cost centers for cloud service providers but core engines capable of driving substantial revenue growth for enterprise customers, then the current market volatility could mark a turning point toward a new round of steady growth.

Investors and industry observers are closely monitoring NVIDIA's performance, as it not only represents the developmental direction of the AI computing sector but is also directly linked to the confidence trajectory of the entire AI industry.
