RPT-BREAKINGVIEWS-AI boom is infrastructure masquerading as software

Recommended Articles

Featured Tools

Top News

Micron Technology Stock Outlook: Can MU Stock Rally Above $1,000 in 2026?

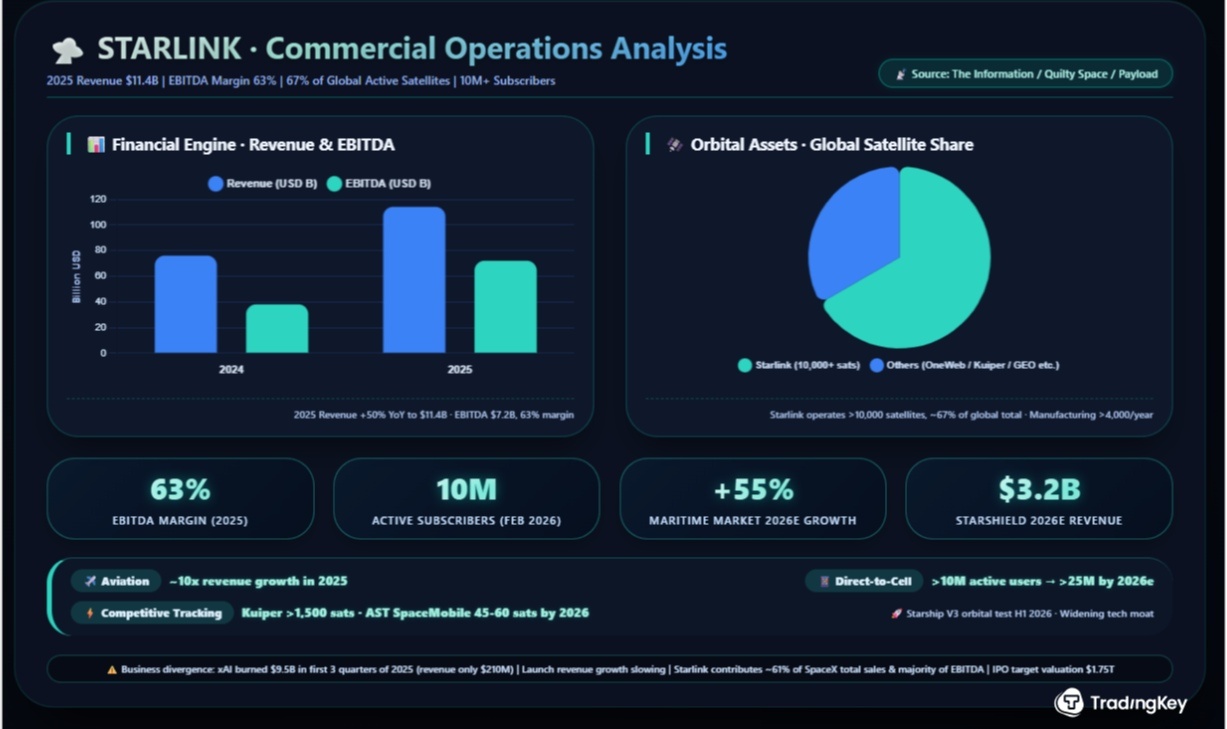

SpaceX IPO Date Set for June 12 at a $1.75 Trillion Valuation - Everything You Need to Know About SPCX

Seagate Shares Tumble Nearly 7% Monday, Dragging Down Memory Chip Sector; Is the Memory Chip Sector Set for a Major Correction?

Could Microsoft Stock Reach $1,000? A Complete 2026-2030 Forecast and Evaluation

Gold Prices Drop Below $4,500, Gold Prices May Fall to $4,360 This Week
