Artificial Intelligencer-Anthropic courted the Pentagon. Here's why it walked away

By Deepa Seetharaman

March 4 (Reuters) - (Artificial Intelligencer is published every Wednesday.)

For AI lab Anthropic, losing a Pentagon contract has coincided with a spectacular surge in popularity.

In the wake of a public clash with the U.S. government, the company's chatbot Claude has topped the Apple App Store and its annualized revenue pace has shot up to $19 billion, from $14 billion just weeks ago.

Supporters of the company have scrawled admiring messages in chalk outside its headquarters. One read: “God loves Anthropic.”

The AI research lab, which CEO Dario Amodei cofounded five years ago, has staked its reputation on being safer than other AI companies. In recent months, the company has clashed with the Pentagon over Amodei’s refusal to compromise on two issues: domestic surveillance and autonomous weapons.

Don’t mistake Amodei for a pacifist. More than any other lab, Anthropic aggressively courted the U.S. national security apparatus. In an interview with CBS, Amodei said he wasn’t opposed to AI-enabled weapons, only that today’s AI systems weren’t up to the task.

Many investors worry about the long-term effects of the Pentagon spat on Anthropic’s business, especially if the Pentagon declares the company a “supply-chain risk.”

Amodei has discussed the dispute with major backers, including Amazon AMZN.O CEO Andy Jassy, two people said. Other investors have reached out directly to the Trump administration about the tensions. The discussions center on avoiding a broader ban on Anthropic's AI for all Pentagon contractors.

As my colleagues Krystal Hu, Jeffrey Dastin and I show in a new exclusive on Wednesday, some investors have been disappointed that Amodei let the problem get this far. They argue that this issue is as much about style as it is about substance. More on that below.

OUR LATEST REPORTING IN TECH AND AI:

Exclusive-Anthropic investors push to de-escalate Pentagon clash over AI safeguards, sources say

Trump to meet tech giants on AI energy pledge ahead of midterms

Defense contractors, like Lockheed, seen removing Anthropic's AI after Trump ban

Intel board chair Frank Yeary to depart after 17 years

AI may be creating instead of destroying jobs for now, ECB blog argues

Alibaba's Qwen AI division head becomes latest exec to leave this year

ANTHROPIC VERSUS PENTAGON: ‘AN EGO AND DIPLOMACY PROBLEM’

Few executives have spent as much time warning the public about AI’s potential downsides as Dario Amodei.

His company, Anthropic, strikes an equally cautious tone about AI and the limits of humans to control its actions. Its researchers regularly publish papers about the ways its systems try to cheat and deceive humans.

At the same time, Anthropic is eager to help the war effort.

In late 2024, Anthropic struck a deal to make its technology available through Palantir's PLTR.O products. By mid-2025, it had signed a $200 million Pentagon contract and announced Claude models tailored for military use, including handling classified materials.

For Amodei, there is nothing contradictory about the two stances. The right technical safeguards and strict user policies can minimize risk.

That insistence on safeguards and usage policies, however, rubbed those negotiating opposite Anthropic the wrong way. Many people close to the situation say that stylistic differences, perhaps even more than substantive ones, intensified the problems between the company and the Pentagon.

As one person put it, “It’s an ego and diplomacy problem.”

Tensions between Anthropic and the Pentagon have been escalating for months. In talks with Defense Department officials, Anthropic stressed its usage policies and red lines. Some at the Pentagon felt the company was telling the government how to do its job, people familiar with the matter said.

Pentagon officials also grew frustrated by safety restrictions embedded in Claude that limited its use in war-gaming scenarios. In those cases, Claude would simply refuse to engage in a particular exercise – a problem from the military’s perspective. Officials say they want to use generative AI technology to protect Americans.

Emil Michael, the Pentagon's point person on the negotiations, argued Anthropic's technology should be treated like any other software tool, such as Microsoft Excel. He and others at the Pentagon say they should be bound only by U.S. law, not company usage policies.

The stakes are significant. Anthropic's enterprise sales make up roughly 80% of its revenue, and its projected annual revenue run rate has reached about $19 billion. The success of a widely anticipated IPO hinges on that momentum continuing.

For now, some talks between Anthropic and the Pentagon are continuing, one person said, though the terms remain unclear.

CHART OF THE WEEK

By Krystal Hu, Technology Correspondent

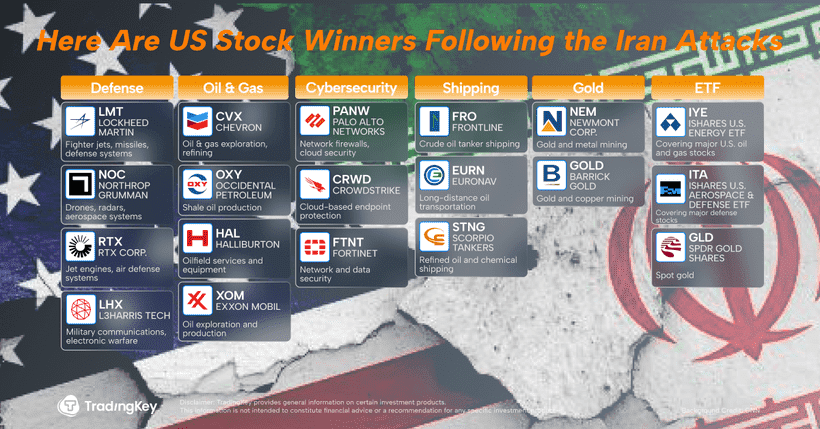

As the U.S.-Israeli war on Iran escalates, the ripple effects are reaching AI and cloud infrastructure. This week, Amazon said its AWS data centers in the UAE and Bahrain were damaged by drone strikes, underscoring how physical conflict can intersect with the technology infrastructure underpinning AI. The chart shows major providers — Amazon, Microsoft MSFT.O Azure, Google Cloud and Oracle ORCL.N Cloud — have established or expanded data center footprints across the region, from Saudi Arabia and the UAE to Qatar, Bahrain and Israel. In total, Big Tech has committed more than $30 billion to build out cloud and AI infrastructure across the Middle East, according to a Reuters estimate.

This buildout reflects broader Middle Eastern efforts to attract foreign capital and diversify economies through technology, semiconductors and AI. But the recent strikes highlight a new layer of risk: geopolitics matters for AI infrastructure too. As nations position themselves as AI hubs, their ability to protect and sustain that infrastructure in the face of regional conflict could increasingly influence where companies choose to invest, and how resilient those investments prove to be.