Nvidia bets on AI inference as chip revenue opportunity hits $1 trillion

By Stephen Nellis and Max A. Cherney

SAN JOSE, California, March 16 (Reuters) - Nvidia (NVDA.O) said the revenue opportunity for its artificial intelligence chips may reach at least $1 trillion through 2027, as the company outlined a strategy to compete more aggressively in the fast-growing market for running AI systems in real time.

CEO Jensen Huang unveiled a new central processor and an AI system built on technology from Groq - a chip startup from which Nvidia licensed technology for $17 billion in December - at the company's annual GTC developer conference in San Jose, California.

The moves are part of Huang's bid to firm up the company's position in so-called inference computing, the process of answering queries, where its graphics processors face greater competition from central processing units and custom processors built by the likes of Google. Nvidia chips have dominated the process of AI model training, which has been the focus of recent years.

"The inference inflection has arrived," Huang said. "And demand just keeps on going up," he added.

Dressed in his signature black leather jacket, Huang was speaking at a hockey arena with a capacity of more than 18,000 at the four-day conference that has become one of the biggest showcases of AI technology. "I just want to remind you, this is a tech conference," he told the audience.

But after a dazzling rally that made Nvidia the first company to hit a $5 trillion valuation last October, doubts have risen about its growth. Investors have also questioned whether its plan to plow profits back into the AI ecosystem will pay off. Huang's comments allayed some fears.

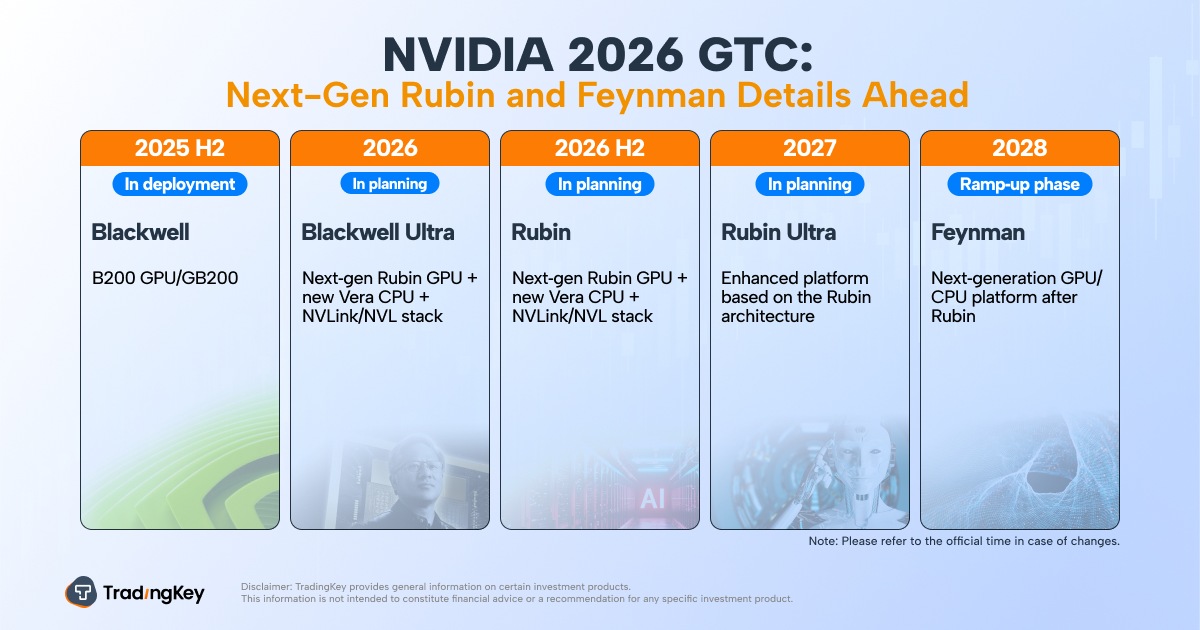

The $1 trillion forecast is up from the $500 billion revenue opportunity through 2026 that Nvidia cited for its Blackwell and Rubin AI chips on its last earnings call in February.

Shares of Nvidia briefly jumped on the new forecast but pared those gains to close up 1.2%.

"Huang mapping out a $1 trillion opportunity through 2027 underscores the durable demand for Nvidia’s AI infrastructure despite investor concerns," Emarketer analyst Jacob Bourne said.

"It signals Nvidia is sustaining its leadership in the AI chip market while the overall AI industry expands beyond early experimentation into large-scale deployment."

INFERENCE BOOM

Huang said that inference, where AI systems answer questions or carry out tasks, will be split up into two steps.

Nvidia's Vera Rubin chips will handle a first step called "prefill," where the user's request is transformed from human words into the language of "tokens" that AI computers use.

Groq's new chips will handle a second "decode" stage where the AI computer provides the answer the user is looking for.

After spending hundreds of billions of dollars in recent years on chips for training their AI models, companies such as OpenAI, Anthropic and Meta (META.O) are shifting toward serving hundreds of millions of users who are tapping those AI systems.

That is also driving demand for CPUs - a market dominated by Intel - which are increasingly seen as a viable alternative to Nvidia's graphics processors for deploying AI models.

"We are selling a lot of CPU standalone," Huang said as he unveiled the new Vera CPU. "This is already for sure going to be a multi-billion-dollar business for us," he added.

Huang also showed off the company's Feynman roadmap but offered few details beyond a list of the various chips Nvidia plans to include in the platform, including AI processors and several networking chips. The Feynman architecture is expected in 2028, following the company's Rubin Ultra chips.

The company is also targeting the market for autonomous AI agents with NemoClaw, which integrates with the viral OpenClaw platform to add privacy and safety controls. OpenClaw, a tool that can autonomously execute a wide range of tasks with minimal human guidance, has generated global buzz.

"It's kind of upleveled the entire discussion. It's upleveled the entire thought of how they do infrastructure," said Technalysis Research president Bob O'Donnell, referring to the announcements.

"He (Huang) used to come out with a new GPU chip and say, look, here's my new chip. Now he's got, you know, five racks of equipment that make up these systems."