Micron Earnings Preview: What can the Current Memory Supply Picture Tell Us?

The AI memory market faces a structural shortage driven by surging DRAM, HBM, and NAND demand for AI training and inference. HBM, in particular, consumes three times more wafers than traditional DRAM, with limited new capacity expected until 2028-2029. Micron (MU) forecasts significant Q2 2026 revenue and EPS growth with high margins, but its flat 2026 capacity, coupled with competitors Samsung and SK Hynix expanding, poses risks. Potential bottlenecks for MU could lead to market share loss and technological lag, despite its attractive valuation.

The Current Memory Economics

Memory has been among the hottest investment themes related to AI. Demand for DRAM, HBM and NAND memory is growing, driven by both AI training and inference. This is especially true for HBM, where demand is expected to grow at a ~30% CAGR over the coming years.

Why is the supply situation so tight? The answer is a perfect storm: several demand and supply pressures are hitting at once.

GPU giants like Nvidia and AMD need HBM for their products, which drives demand up. HBM is a relatively new technology, and it doesn’t have a secondary market like traditional DRAM and NAND. As a result, production capacity originally used for DRAM has increasingly been allocated to HBM. However, HBM consumes 3x more wafers than non-HBM DRAM, so shifting production from DRAM to HBM drives DRAM supply down and prices up. The tight supply is exacerbated by the fact that it takes around two years to build a memory chip production plant from scratch.
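The wafer trade-off above can be made concrete with a small sketch. It assumes a fixed wafer budget and reads the "3x" figure as: one unit of HBM output needs three times the wafers of one unit of conventional DRAM output. The function name, the 100-wafer budget and the HBM shares are illustrative assumptions, not figures from any supplier.

```python
def wafer_tradeoff(total_wafers: float, hbm_wafer_share: float,
                   wafers_per_hbm_unit: float = 3.0) -> dict:
    """Split a fixed wafer budget between conventional DRAM and HBM.

    Output is measured in "conventional DRAM wafer equivalents":
    one wafer of conventional DRAM yields 1 unit, while one unit of HBM
    needs `wafers_per_hbm_unit` wafers (3.0 is the article's 3x figure).
    """
    if not 0.0 <= hbm_wafer_share <= 1.0:
        raise ValueError("hbm_wafer_share must be between 0 and 1")
    hbm_wafers = total_wafers * hbm_wafer_share
    dram_wafers = total_wafers - hbm_wafers
    hbm_output = hbm_wafers / wafers_per_hbm_unit
    return {
        "dram_output": dram_wafers,
        "hbm_output": hbm_output,
        "total_output": dram_wafers + hbm_output,
    }


# Hypothetical budget of 100 wafers with a rising share allocated to HBM.
for share in (0.0, 0.3, 0.5):
    result = wafer_tradeoff(100.0, share)
    print(f"HBM share {share:.0%}: total output {result['total_output']:.1f}")
```

In this toy model, moving 30% of wafers to HBM cuts total output from 100 to 80 units, which illustrates why the shift tightens conventional DRAM supply even when total wafer starts are unchanged.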

On the NAND side, things are not much better, as suppliers are converting NAND production facilities to DRAM and withholding NAND supply to push prices up. Samsung and SK Hynix have reduced their NAND wafer production by roughly 5% to 10% in 2026 compared to 2025.

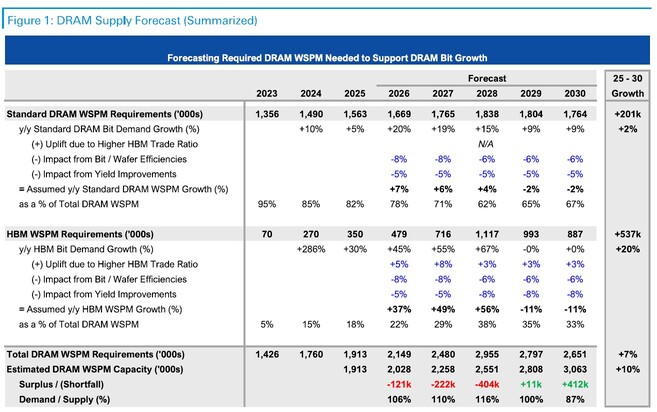

Industry-wide, meaningful supply improvements may arrive from 2028-2029 onwards, as the planned production plants come into operation.

Source: Deutsche Bank

The market already agrees that this is not a typical cycle but more of a long-term structural change. We cannot deny the enormous financial boost for players like Micron (MU).

For Q2 2026, MU expects +137% revenue growth and +450% EPS growth. These are peak NVDA numbers. On top of that, gross margin is expected to reach nearly 70%, up from the high 30s, thanks to the enormous pricing power MU has.

| Metric | Q2 2025 (Actual) | Q2 2026 (Consensus) | YoY Change |
| --- | --- | --- | --- |
| Revenue | $8.05 billion | ~$19.07 billion | +137% |
| Non-GAAP EPS | $1.56 | ~$8.58 | +450% |
| Gross Margin | 37.9% | ~68.5% | +3,060 bps |
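As a quick check, the year-over-year figures in the table can be reproduced from the two columns. This is a minimal sketch; the helper names are illustrative, and the inputs are simply the table values.

```python
def pct_change(prior: float, current: float) -> float:
    """Year-over-year change in percent."""
    return (current - prior) / prior * 100.0


def bps_change(prior_pct: float, current_pct: float) -> float:
    """Change between two percentages, in basis points (1 pp = 100 bps)."""
    return (current_pct - prior_pct) * 100.0


# Values taken from the table: Q2 2025 actual vs Q2 2026 consensus.
revenue_growth = pct_change(8.05, 19.07)   # revenue, $ billion
eps_growth = pct_change(1.56, 8.58)        # non-GAAP EPS, $
margin_expansion = bps_change(37.9, 68.5)  # gross margin, %

print(f"Revenue: {revenue_growth:+.0f}%")
print(f"EPS: {eps_growth:+.0f}%")
print(f"Gross margin: {margin_expansion:+,.0f} bps")
```

The margin row is expressed in basis points rather than percent because it is a difference between two percentages, not a growth rate.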

But What Can Go Wrong?

Micron’s HBM capacity for 2026 is already fully committed, but the same cannot be said for Samsung and SK Hynix. MU recently discontinued its consumer-facing Crucial brand to move every possible wafer into the AI/Enterprise segment. There is very little “unallocated” silicon left to shift. Micron’s total DRAM wafer capacity is expected to remain flat at ~360k wafers/month for the 2026 calendar year. Micron has two plants under construction, which will start producing in 2027:

- Boise, Idaho (USA) - first output expected H1 2027

- Tongluo (Taiwan) - first output expected H2 2027

The situation is different for Samsung, which has plenty of floor space to utilize and room to improve production yields. SK Hynix has already reached high yields but, like Samsung, can still convert free capacity (Cheongju, Korea).

| Company | 2025 Capacity (Wafers/Mo) | 2026 Capacity (Wafers/Mo) | Net Change (%) |
| --- | --- | --- | --- |
| Samsung | 759,000 | 793,000 | +4.5% |
| SK Hynix | 597,000 | 648,000 | +8.5% |
| Micron | 360,000 | 360,000 | 0.0% |
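The table also implies a shift in relative capacity. The sketch below recomputes the net change and derives each company's share of the combined capacity of these three suppliers. The share figures are a derived illustration that ignores every other producer, so they are not market share estimates; the names used are illustrative.

```python
# Wafers per month from the table: (2025, 2026).
CAPACITY = {
    "Samsung": (759_000, 793_000),
    "SK Hynix": (597_000, 648_000),
    "Micron": (360_000, 360_000),
}


def net_change_pct(before: int, after: int) -> float:
    """Capacity change from 2025 to 2026, in percent."""
    return (after - before) / before * 100.0


def share_pct(company: str, year_index: int) -> float:
    """Share of the three suppliers' combined capacity (0 = 2025, 1 = 2026)."""
    total = sum(caps[year_index] for caps in CAPACITY.values())
    return CAPACITY[company][year_index] / total * 100.0


for name, (cap_2025, cap_2026) in CAPACITY.items():
    print(
        f"{name}: {net_change_pct(cap_2025, cap_2026):+.1f}% capacity, "
        f"share {share_pct(name, 0):.1f}% -> {share_pct(name, 1):.1f}%"
    )
```

On these numbers, Micron's share of the three suppliers' combined capacity slips from about 21.0% to about 20.0% even though its own capacity is unchanged.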

Given the high demand and the ability to raise unit prices, capacity constraints may not be an issue today. But if MU’s production hits a bottleneck, MU may lag its two competitors.

In a commoditised industry like memory, this sounds like the perfect problem to have – limited supply gives a lot of bargaining power to negotiate higher pricing; however, there are quite a few problems with this logic.

- Capped revenue: MU cannot sell more units even if it wants to, so revenue growth depends solely on price increases.

- Market share loss: MU risks being stuck in a supply bottleneck while SK Hynix and Samsung lock in lucrative contracts.

- Technological lag: the lack of “spare room” may prevent MU from developing a new generation of HBM products. As Nvidia develops new configurations every 2-3 years, all three memory giants are under pressure to innovate constantly.
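The first point in the list above follows from a simple decomposition: revenue is price times volume, so revenue growth compounds price growth and volume growth. The sketch below uses a hypothetical +20% price increase, and it assumes that a competitor's capacity growth (the +8.5% shown for SK Hynix) translates one-for-one into volume growth, which is a simplification.

```python
def revenue_growth(price_growth: float, volume_growth: float) -> float:
    """Revenue growth implied by price and volume growth (all as fractions).

    Revenue = price * volume, so (1 + g_rev) = (1 + g_price) * (1 + g_volume).
    """
    return (1.0 + price_growth) * (1.0 + volume_growth) - 1.0


PRICE_GROWTH = 0.20  # hypothetical industry-wide price increase

# Flat capacity: revenue can only grow as fast as prices.
capped = revenue_growth(PRICE_GROWTH, 0.0)

# Assumed: +8.5% capacity converts fully into +8.5% volume.
expanding = revenue_growth(PRICE_GROWTH, 0.085)

print(f"Flat volume: {capped:+.1%}")
print(f"Growing volume: {expanding:+.1%}")
```

Under the same price increase, the supplier with growing volume gets roughly 30% revenue growth versus 20% for the capacity-capped one, which is the mechanism behind the market share concern.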

As of March 2026, there are rumors that NVDA may prioritize SK Hynix and Samsung for its Rubin production and that Micron has reportedly been moved to the “Rubin CPX”, a mid-range, inference-oriented accelerator. This matters because Nvidia is the most important client for HBM, representing nearly 70% of demand.

Other Structural Advantages of SK Hynix and Samsung against MU

Apart from more room to add capacity, SK Hynix and Samsung may have some other advantages.

Samsung, for example, is more integrated. It has both memory fabs and a packaging house, which brings supply chain advantages, as it doesn’t need to outsource. SK Hynix, on the other hand, has a much deeper-rooted relationship with TSMC, which may lead TSMC to prioritize the Korean giant over Micron.

In terms of government support, MU is receiving generous help from the US government, but SK Hynix and Samsung have two advantages: 1) their operations are more concentrated in Korea (MU’s supply chain is more global), and 2) the Korean government is investing heavily in physical infrastructure (grid, etc.). Also, with lower electricity and labor costs, it can be 20-30% cheaper to operate a plant in Korea than in the United States.

Final Words

MU is still very attractive from a valuation point of view, with a forward P/E of just 13.79 despite 4-5x growth in earnings. Again, these are Nvidia numbers, but even Nvidia trades at a much higher P/E.

However, the tight supply has already been largely priced in. For MU to go up further, it needs to show that there is even more demand for memory beyond 2028.

Also, compared with its two peers, Samsung and SK Hynix, MU has limited room to expand supply and optimize its supply chain, which may cause a divergence in performance. We don’t see that yet, but once supply and demand normalize, not all three may be winners.
